drAwIng

Image-generating AI’s are some of the most interesting projects coming out of AI labs. These include OpenAI’s Dall-e-2, Google’s Imagen, and Stability AI’s Stable Fusion. I’ll be covering what they are, how they work, and how you can get access to using them!

Background

What are these models?

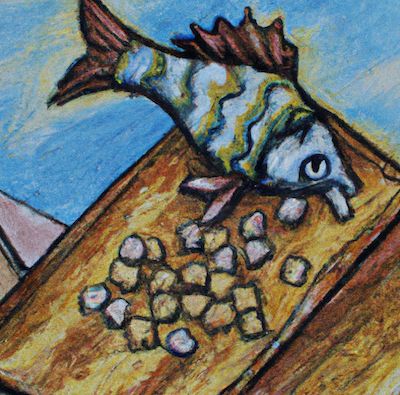

These models take in text and produce a completely computer-generated image. There are plenty of examples but here is an example below. I typed in “A fish standing on a roof eating cereal” and this came up:

Here is another example from last night "cubist style of grandma being pushed in a wheelchair":

It’s mind-blowing the capabilities of this technology. Companies like jenkins.ai are using this technology to change how creatives work. Images for various sites and products can be generated. There are also products like This Person Doesn’t Exist. They use a similar model to generate random human faces that don’t exist. Many people will use this for generating models for ads and they don’t have to pay for rights to the image.

People are already taking credit for what these models can do. One person won an art competition using a generated image. I also imagine there are fronting artists on Fivver profiting from this model.

The reaches of this are wide and many are scared this will replace humans. In my opinion, it will just change the nature of many creatives’ jobs. It’s been mentioned that there could be a future where this is someone’s job. To be an expert at “image generation prompt writing”. Knowing how to communicate well with the AI to get a quality image is definitely a skill. It’s similar to being a good “googler” or even a good data miner.

How do they work?

I’ll keep things very high level for this. The model consists of two parts. The first is the part that maps text to images. That is called CLIP. CLIP stands for Contrastive Language-Image Pre-training. The model is given a list of captions and it is trained to pick the right one. This gives the model a richer understanding of an image even if there aren’t many examples to be trained on. This rich vector graph becomes very useful when combined with the second part below.

The second part is the diffusion model. This works by taking an image and scrambling a few pixels. Then the model is trained to reassemble the pixels so it gets back to the old image. The model learns how to take a grid of pixels and go from some randomness to little to no randomness. Then it’s trained on a grid of random pixels and is trained to generate some viable image. The model now knows how to generate an image from randomness.

The last part is to fuse these two together. CLIP maps the text to what an image should look like. The model is given a random grid of pixels. Then the model is trained to build an image that the CLIP model "approves of". More can be read here.

How To Get Access?

The best open access model is Dall-E-2. The issue is that you need to join the waitlist. For me, this took a few months to get access (though I believe wait times are shorter now). The other bad part is that you’re limited to 15 images a month. Not great if you want to build a project/product around it. I assume this will change soon but the limitation is quite annoying.

Luckily there is an open source model we can use. This is Stability AI’s Stable Fusion mentioned above. It’s an organization that is funded to do AI research for the general public. This model can be run on your very own computer. The code and how to run it can be found on their GitHub. I was going to write a whole post about how to set this up, but being the lazy person I am, I found a better way.

The founder of rep.it created a repl that allows you to use the open source model for free! This requires no time or creds to use (not sure if there is rate limiting). That can be found here.

Note: I’ve found the results of Stable Diffusion are not as high quality as Dall-E-2.

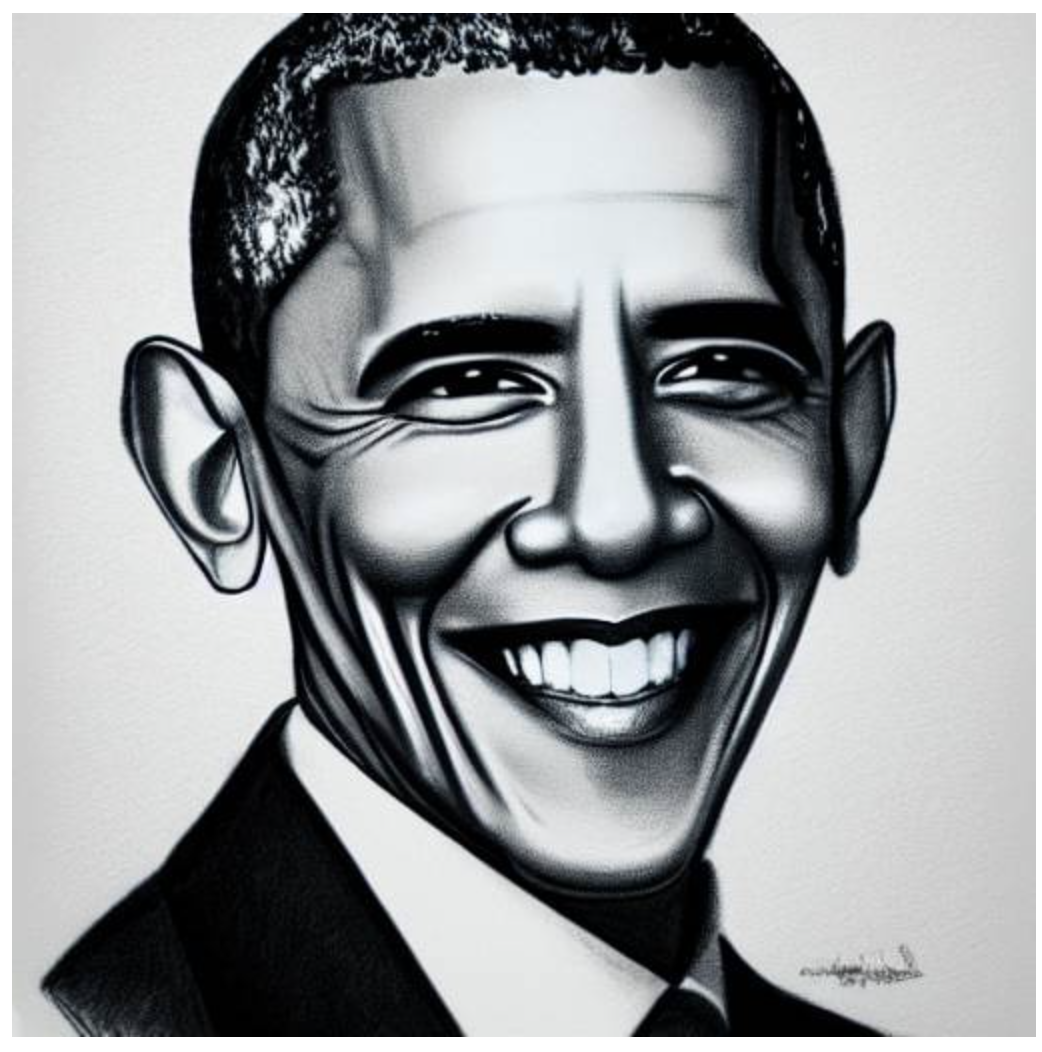

Here are some examples of what I was able to produce with it.

“picasso version of a cat playing violin”

“3d model of a castle on a mountain with waterfalls”

“pencil sketch of barack obama”

Happy creating! Let me know if you make anything!